Signals and Field Notes #12

Learning and Innovation Observed... Thank you for reading

Claude-Powered Agent Apparently Deletes Company Database, Debases Itself Further in Confession: Couple things:

1. You need to go read the “confession” - hysterical.

2. I need someone to produce a chart that shows all the times that either Silicon Valley or The Simpsons accurately predicted the future.

“On Saturday, the founder of (what else?) a SaaS business, PocketOS, wrote one of those long X posts labeled an “article” about an incident his company endured while vibe coding with a Claude Opus 4.6-powered version of Cursor, the AI coding assistant that might soon become a wholly owned subsidiary of SpaceX. The agent, it seems, drastically overstepped and deleted the production database for PocketOS, which triggered an even deeper disaster when a cloud provider allegedly deleted the backups.”

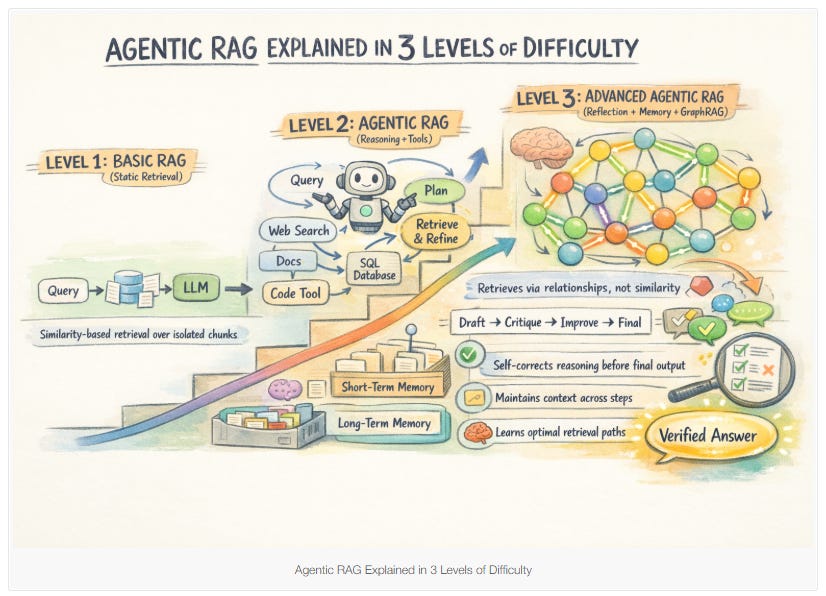

Agentic RAG Explained in 3 Levels of Difficulty: Here’s the thing - I like this article and I actually think we need more like it. I hate the “ultimate cheat sheets” because they’re never ultimate and they don’t teach the basic fluency people need. The thing that struck me while I was reading this, though, was how apt the analogy is of AI being like a really trainable intern with perfect memory. The steps here to build agentic RAG are pretty much how you’d teach someone to do solid research. Of course with AI, once you teach them, they will never forget and you never have to pay them and they can work 24/7. Of course, they’ll never grow in soft skills, become visionary leaders or strike out on their own to create a new market. So there’s that.

Atlassian and Twilio Crush the Quarter, Accelerate. Is the SaaSpocalypse Over?: Great read…hardest hitting points:

The companies selling to AI builders (Twilio, Cloudflare, Snowflake) are picking up tens of thousands of new customers because every AI-native startup needs their infrastructure. The companies selling per-seat application software to humans (Atlassian, HubSpot) are seeing logo growth slow even as they extract more from existing accounts.

AI is re-accelerating some mature B2B leaders, when deployed as expansion, not deflection.

Infrastructure for AI agents is its own boom.

There’s hope here but it requires adaptation.

Change is inevitable. Adaptation is not.

Ask.com shuts down after 30 years: …and an era ends…

AI can cost more than human workers now: I can’t tell if this means that irony is dead or if it’s not dead….

IT budgets are getting blown out as some companies increasingly spend more on AI than on employees’ salaries.

Why it matters: Maybe human labor will be more cost-efficient after all.

What they’re saying: “For my team, the cost of compute is far beyond the costs of the employees,” Bryan Catanzaro, vice president of applied deep learning at Nvidia, told Axios.

Uber’s chief technology officer already blew through his full 2026 AI budget due to token costs, according to The Information.

Maryland becomes first state to ban surveillance pricing in grocery stores: Fear the Turtle. Well done MD.

McKinsey’s new AI report argues the productivity payoff is real but conditional: This just nails it, and it explains why the companies that are laying off people because of immediate gains from AI doing the same old thing faster are going to be hurting or gone in 24-36 months.

“The report, ‘AI productivity gains and the performance paradox’, argues that most current AI applications are ‘tools that accelerate existing work’ but ‘largely preserve underlying workflows’, and that the larger productivity gains will only emerge once organisations redesign processes around AI rather than simply bolting it on top. The report’s central historical analogy is electricity in factories. “When electricity first arrived in factories, many businesses simply replaced the steam engine with an electric motor, capturing efficiency gains but leaving the line-shaft layout unchanged,” the authors write. “The breakthrough came later, when small motors enabled managers to rearrange machines around workflows, and ultimately when companies redesigned their factories around electricity, creating new operating models.””

Spotify introduces verified artist badges to help distinguish humans from AI: Regardless of how you feel about AI being used in the creation of something like music, this move is a signal. You can just see it coming - labels like “100% human generated” and “human-made” will become the bespoke content of the future. Different economic classes will have access to varying percentages of “human-made” content. This will all be at least partially untrue, or at least imprecise, because we all use tech to create, so we’ll have to be continually negotiating where that line is. In an ideal world, it might mean greater support for artists, but how many times do we get that?

Red Hat’s OpenClaw maintainer just made enterprise Claw deployments a lot safer: So here is why this is a signal - look at the speed with which Claw moved from a cool thing that one person did in the wild to an operational tool deployed at large. I don’t think this is an isolated development. This isn’t vibe coding but AI-augmented coding done by SMEs. What does this mean for orgs? It means that the need for sandboxes and the willingness to experiment (with rigor) will become more important than ever. Now 2nd order impacts…procurement has to change, security has to adapt to provide safe spaces, HR will have to adapt how people are rated and assessed…all of these pieces and more will have to adapt if you or your org want to be able to move at AI speed in business terms.

“On Tuesday, Red Hat principal software engineer Sally O’Malley released a new open source tool called Tank OS to make it easier to deploy and manage OpenClaw agents more safely. “This was a fun project that I put together on the weekend that I knew would be a really good fit for AI and where we’re going,” she told TechCrunch, adding that she wanted to give it “to the masses.””

Introducing talkie: a 13B vintage language model from 1930: I love this idea of temporally-limited LLMs. Here’s why - if we can go back and apply constraints to problems from the past and see where and maybe how those temporal locations managed to solve issues with what they had - that info may point us toward lessons in what constraints are really important and how to overcome or respond to them. Also, I find the phrase “vintage LLMs” to be very Gibson-esque.

“Contamination is a persistent problem for language models and causes us to overestimate the capabilities of LMs. Vintage LMs are contamination-free by construction, enabling unique generalization experiments, like examining whether a model with no knowledge of digital computers can learn to code in a modern programming language. While vintage models dramatically underperform models trained on web data (which includes code), we’ve found that they are slowly but steadily improving at this task with scale.”

Mistral AI launches Workflows, a Temporal-powered orchestration engine already running millions of daily executions: Shocker - tech company sees tech as biggest problem to deploy tech.

“The product, which launches as part of Mistral’s Studio platform, is the company’s clearest articulation yet of a thesis that is quietly reshaping the enterprise AI market: that the bottleneck for organizations adopting AI is no longer the model itself, but the infrastructure required to run it reliably at scale.”

Look, at one level, infra will also be a critical limiter, BUT if you have great tools sitting on great infra and you haven’t done the hard work of org design/change, then you’ll just be doing the same thing faster. I’d argue that without the org design/change piece done, how will you know what infra you’ll need?

The global edtech boom is fading as investors look elsewhere: I’m going to call this a downstream signal for the corporate training market.

“Global edtech investment peaked at $16.7 billion in 2021, fueled by lockdowns that kept millions of children out of classrooms. By 2025, venture capital had plummeted to less than $3 billion, according to Tracxn, a Bengaluru-based platform that tracks global startup funding.”

The Age of AI means we need to throw out our old KPIs and replace them with new ones: Sometimes you have to get into an article to see if you’re going to like it. Not so with this one. Right off the bat, Natalie Nixon is saying more eloquently what I’ve been screaming - that your activity is not your value and that AI is coming for your to-do list.

“We are living through a fundamental shift in what work is for. As AI takes on more routine cognitive tasks, the uniquely human capacity to imagine, connect, and create meaning becomes the primary source of organizational value. Yet most companies are still measuring performance metrics prioritized for a different era: inventory turnover, cost per lead, and utilization rates. These metrics were designed to optimize extraction. They are poorly equipped to cultivate imagination.”

She’s also spot on that what an org measures is a prime indicator of what it values. I also like her framing that her alternative KPIs aren’t “replacements for financial performance metrics. They are the upstream investments that make sustainable performance possible.” (That raises a separate issue, though: do we have the accounting systems to account for and/or value these investments?) Looking at some of her potential KPIs, I’m reminded of this passage from Les Mis by Victor Hugo:

“One is not idle because one is absorbed. There is both visible and invisible labor. To contemplate is to toil, to think is to do. The crossed arms work, the clasped hands act. The eyes upturned to Heaven are an act of creation.”

Chuck Jones’ The Dot and the Line Celebrates Geometry & Hard Work: An Oscar-Winning Animation (1965): Humans are cool.

That’s “curious” spelled out using images from Landsat - get yours here.

Thank you for posting the Silicon Valley clip! I'd totally forgotten that scene. As you point out, it's incredibly prescient! (The open question is whether the infamous middle-out algorithm will ever come to life.)